LiDAR: Apple LiDAR Analysis

Figure 1. Apple’s promotion of DTOF

This article analyzes Apple’s LiDAR sensor and the technology behind it. It covers the dToF principle, the receiver (RX), the illumination (TX), and system tradeoffs.

1. dToF

First, Apple’s LiDAR sensor in the iPad Pro and iPhone 12 is based on dToF, short for “Direct Time-of-Flight”. dToF is one of several 3D depth imaging solutions. It projects light over the entire scene and calculates depth from the time the reflection takes to return. Its main characteristic is long range, so its advantage lies in applications that need mapping over some distance, such as human action recognition, building recognition, and scene recognition and modeling.

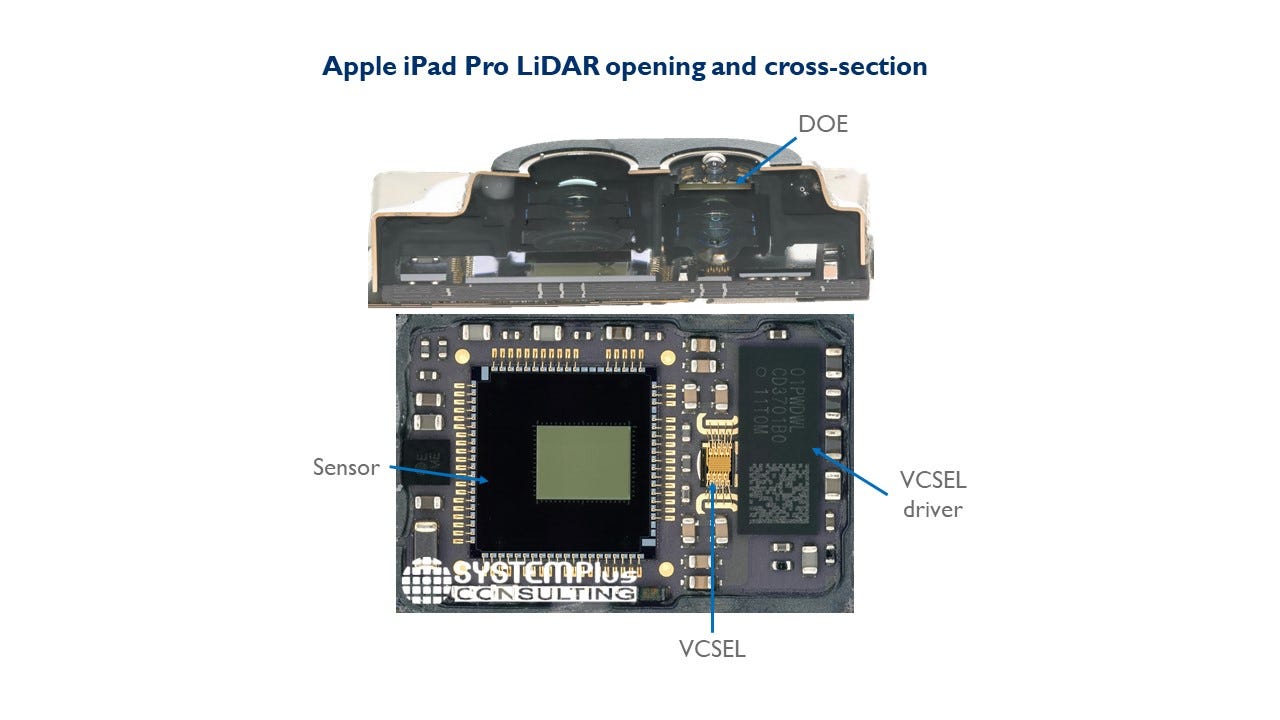

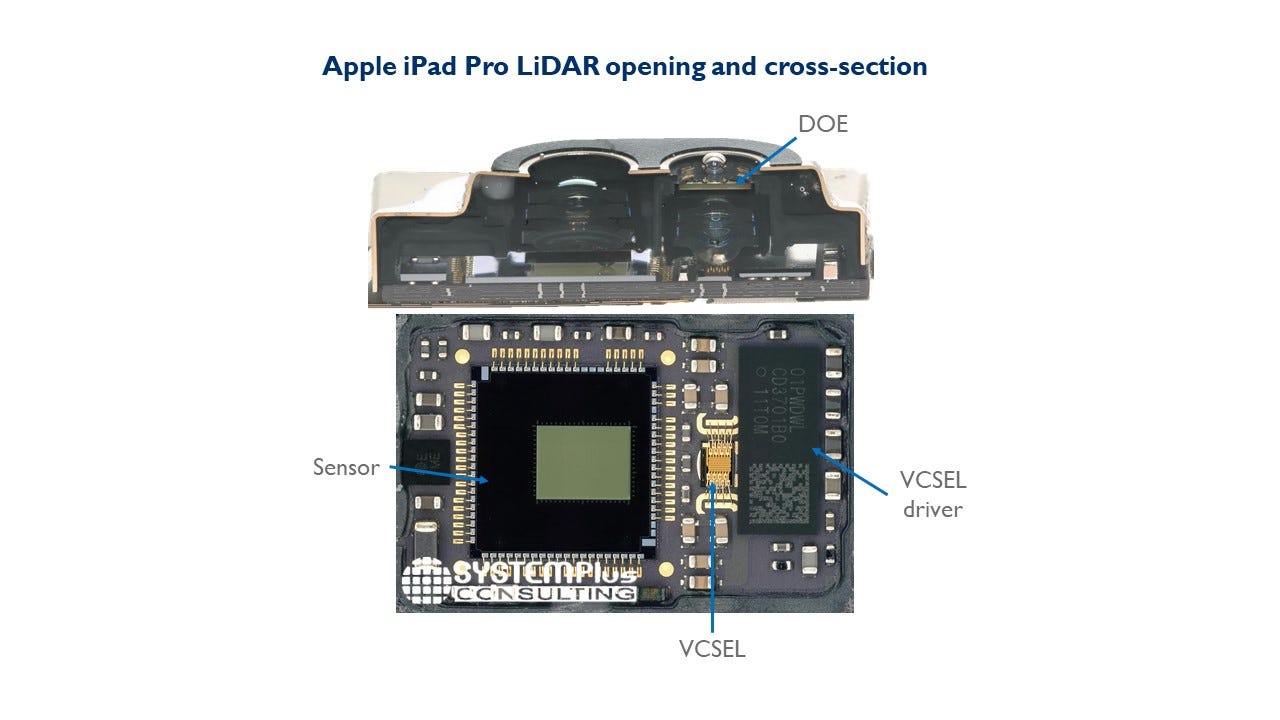

DTOF (Direct Time of Flight) is a kind of lidar that measures distance directly from the flight time of light. It measures the time a light pulse takes to travel to the target and bounce back to the receiver, then converts that time into the target distance using the speed of light. The field of view of the DTOF on the iPad is approximately 60°×48°. With such a large field of view, the light attenuates rapidly with distance. To preserve the detection distance, Apple uses a diffractive optical element (DOE) to emit a dot matrix, and each dot covers a range of about 1°×1°.
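The time-to-distance conversion described above can be sketched in a few lines. This is only an illustration: the function names are made up for this example, and the speed of light in vacuum is used as an approximation for air.

```python
# Speed of light in vacuum (m/s); close enough to air for a sketch.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def round_trip_time_to_distance(round_trip_s: float) -> float:
    """Convert a measured round-trip time (seconds) into target distance (metres)."""
    # The pulse travels to the target and back, hence the division by two.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def distance_to_round_trip_time(distance_m: float) -> float:
    """Inverse conversion: how long light needs to reach a target and return."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # One nanosecond of round-trip time corresponds to roughly 15 cm of range.
    print(round_trip_time_to_distance(1e-9))
    print(distance_to_round_trip_time(5.0))
```

The conversion shows why timing resolution matters so much: an error of one nanosecond in the measured time shifts the distance by about 15 cm.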

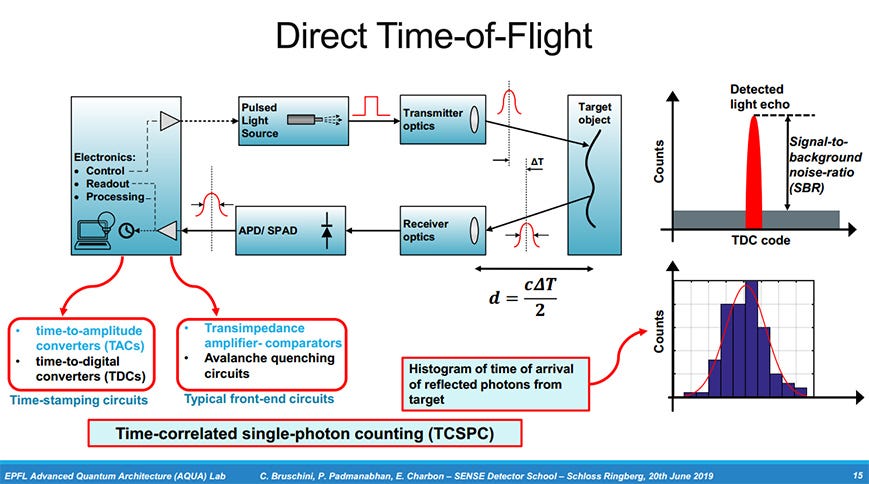

For DTOF ranging, the most important thing is to use multiple pulses to generate a histogram. Its working principle is roughly explained in the figure below:

DTOF ranging principle

The core of distance measurement is building a histogram of photon counts. The width of the histogram peak directly determines the accuracy of ranging. When the power of the light pulse is high, only a small number of pulses is needed to build the histogram, but the histogram differs considerably from the original light intensity envelope. When the optical pulse power is low, more pulses are needed to build the histogram, but the envelope traced by the histogram agrees well with the envelope of the light intensity itself.
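The histogram idea can be illustrated with a toy simulation. This is a minimal sketch, not Apple's or Sony's processing: the Gaussian pulse shape, the uniform background noise, the 1 ns bin width, and all function names and parameter values are assumptions chosen for illustration.

```python
import random

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def simulate_arrivals(true_distance_m, n_pulses, signal_prob, noise_prob,
                      pulse_sigma_s, window_s, rng):
    """Toy model: each pulse yields at most one signal and one noise photon."""
    true_round_trip_s = 2.0 * true_distance_m / SPEED_OF_LIGHT_M_PER_S
    arrivals = []
    for _ in range(n_pulses):
        if rng.random() < signal_prob:
            # Signal photons cluster around the true round-trip time.
            arrivals.append(rng.gauss(true_round_trip_s, pulse_sigma_s))
        if rng.random() < noise_prob:
            # Background photons arrive anywhere in the listening window.
            arrivals.append(rng.uniform(0.0, window_s))
    return arrivals


def build_histogram(arrival_times_s, bin_width_s, n_bins):
    """Accumulate photon arrival times from many pulses into time bins."""
    histogram = [0] * n_bins
    for t in arrival_times_s:
        index = int(t // bin_width_s)
        if 0 <= index < n_bins:
            histogram[index] += 1
    return histogram


def estimate_distance_m(histogram, bin_width_s):
    """Use the centre of the fullest bin as the round-trip time estimate."""
    peak_index = max(range(len(histogram)), key=histogram.__getitem__)
    round_trip_s = (peak_index + 0.5) * bin_width_s
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


if __name__ == "__main__":
    rng = random.Random(0)
    arrivals = simulate_arrivals(3.0, 5000, 0.05, 0.05, 1e-9, 100e-9, rng)
    hist = build_histogram(arrivals, 1e-9, 100)
    print(estimate_distance_m(hist, 1e-9))
```

A single pulse rarely produces a usable detection in this model, but after thousands of pulses the signal bin stands clearly above the background. A real sensor would typically refine the peak position beyond one bin width, which this sketch does not attempt.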

On this generation of DTOF sensor, the global frame rate is 30 fps, and each frame contains 8 sub-frames. The light-emitting points are divided into four groups (described in the illumination section), and each group is responsible for two sub-frames. Each sub-frame contains about 80,000 pulses with a pulse width of about 2–3 ns. That works out to about 4.8 million pulses per second for each group of light-emitting points (30 frames × 2 sub-frames × 80,000 pulses), or about 19.2 million per second across all eight sub-frames.
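The arithmetic behind the pulse budget can be checked with a short script. The frame rate, sub-frame count, group count, and pulses per sub-frame come from the text; the even spacing of pulses within a sub-frame is an assumption used only to estimate the pulse period.

```python
FRAMES_PER_SECOND = 30
SUBFRAMES_PER_FRAME = 8
EMITTER_GROUPS = 4
PULSES_PER_SUBFRAME = 80_000

# Each of the four emitter groups is responsible for two sub-frames per frame.
subframes_per_group = SUBFRAMES_PER_FRAME // EMITTER_GROUPS

# Pulses fired per second by one group, and by all groups together.
pulses_per_second_per_group = (
    FRAMES_PER_SECOND * subframes_per_group * PULSES_PER_SUBFRAME
)
pulses_per_second_total = (
    FRAMES_PER_SECOND * SUBFRAMES_PER_FRAME * PULSES_PER_SUBFRAME
)

# Assumption: sub-frames fill the frame and pulses are evenly spaced within them.
subframe_duration_s = 1.0 / (FRAMES_PER_SECOND * SUBFRAMES_PER_FRAME)
pulse_period_s = subframe_duration_s / PULSES_PER_SUBFRAME

if __name__ == "__main__":
    print(pulses_per_second_per_group)
    print(pulses_per_second_total)
    print(pulse_period_s)
```

Under the even-spacing assumption, the pulse period comes out at roughly 52 ns, which is much longer than the 2–3 ns pulse width.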

The number and power of the pulses chosen by Sony remain controversial. For a SPAD, the number of signal photons in each pulse should be as large as possible so that each pulse has a higher probability of being detected. Moreover, as the number of pulses grows, the memory consumed for storing the histogram and the bandwidth consumed for transmitting it increase proportionally. This puts great pressure on data storage and transmission inside the chip. According to estimates, a data transmission rate of more than 10 Gbit/s is currently required to transmit all signals completely.
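One way to see why more signal photons per pulse help is a simple Poisson model, which is an assumption of this sketch and not something stated in the sources. If a pulse returns a mean of N signal photons to a pixel and the detector has a photon detection efficiency η, the probability of at least one detection is 1 − exp(−ηN). The function name and the example values below are hypothetical.

```python
import math


def detection_probability(mean_signal_photons: float,
                          photon_detection_efficiency: float) -> float:
    """Probability that a pulse triggers at least one detection (Poisson model)."""
    mean_detected = mean_signal_photons * photon_detection_efficiency
    # P(at least one) = 1 - P(zero) for a Poisson-distributed count.
    return 1.0 - math.exp(-mean_detected)


if __name__ == "__main__":
    # Hypothetical efficiency of 10%; real values depend on the device and wavelength.
    for photons in (1, 5, 10, 50):
        print(photons, detection_probability(photons, 0.10))
```

The curve saturates toward 1 as the photon count rises, so beyond a certain pulse power each extra photon adds little detection probability.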

2. Receiver (RX)

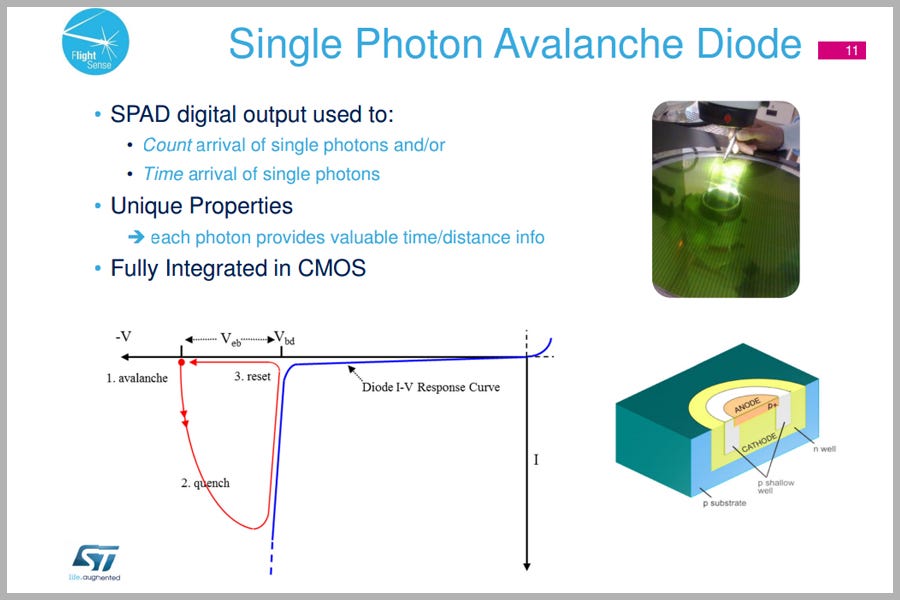

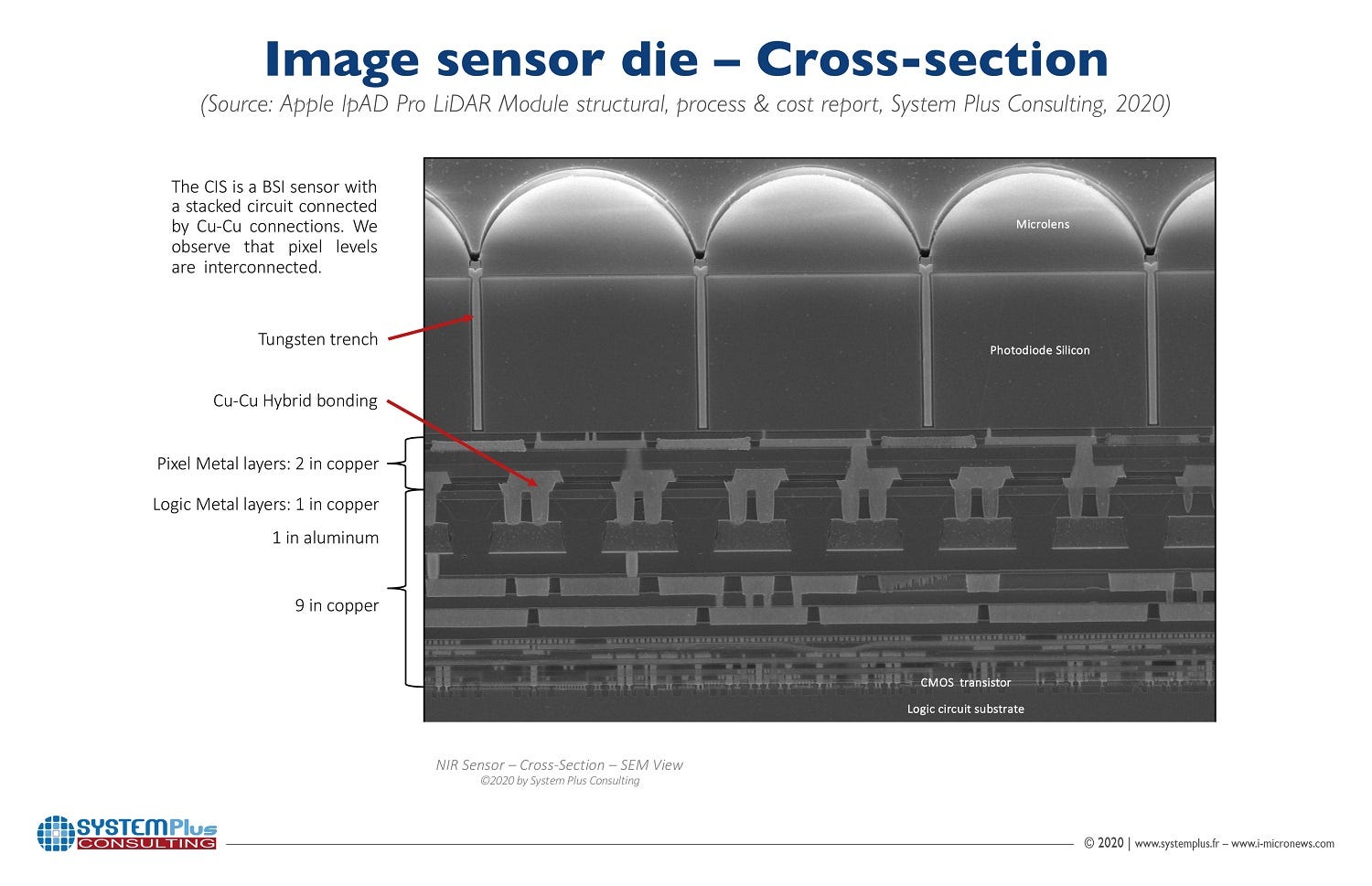

Sony’s 30K-resolution sensor with a 10 µm pixel size does indeed use dToF technology with a Single Photon Avalanche Diode (SPAD) array. The in-pixel connection between the CIS and the logic wafer is made with hybrid bonding Direct Bonding Interconnect technology, which is the first time Sony has used 3D stacking for its ToF sensors. Deep trench isolation, with trenches filled with metal, completely isolates the pixels.

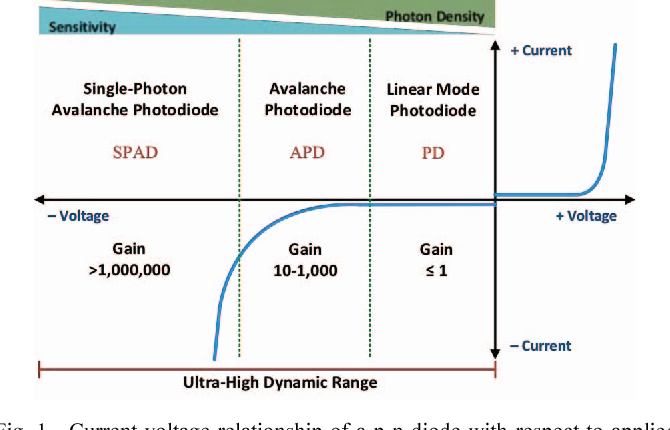

Figure 3 Current-voltage relationship of a p-n diode with respect to applied bias voltage. Linear (PD), avalanche (APD), and single-photon avalanche (SPAD) regions of operation provide increasing sensitivity and decreasing saturation threshold for detecting incident visible light.

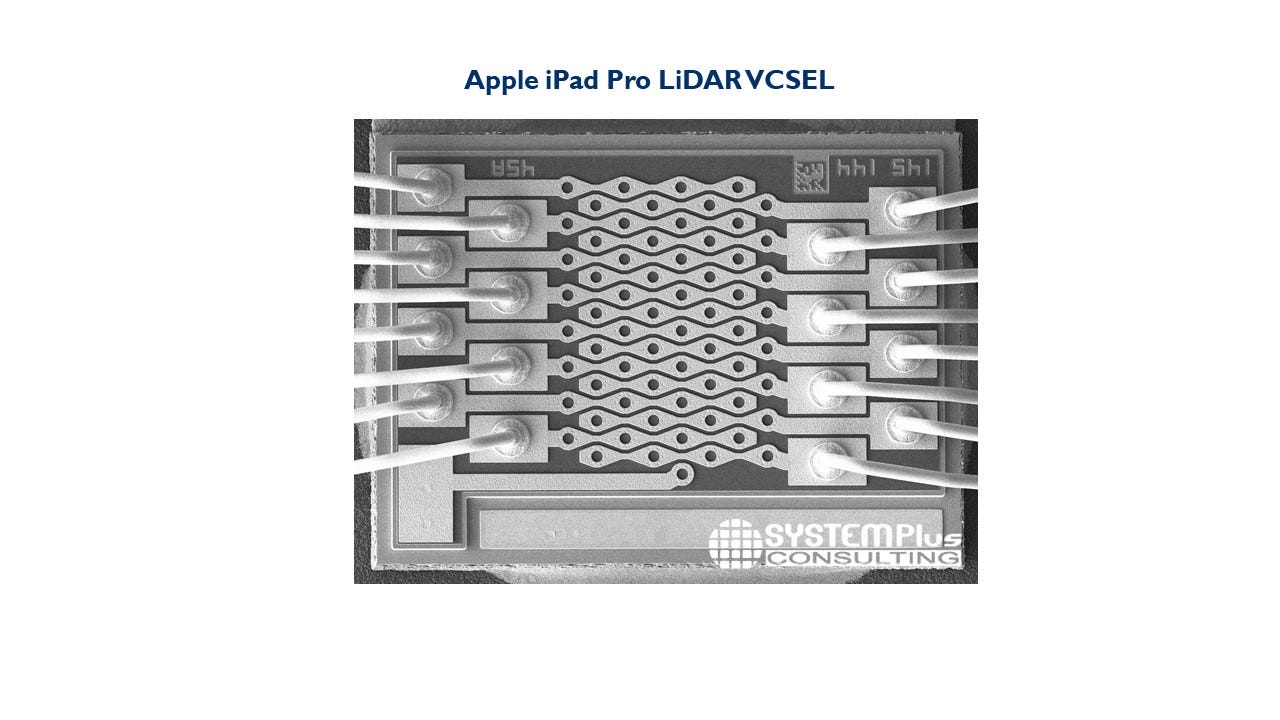

Apart from the CIS from Sony, the LiDAR is equipped with a VCSEL from Lumentum. The laser is designed to have multiple electrodes connected separately to the emitter array.

3. Illumination (TX)

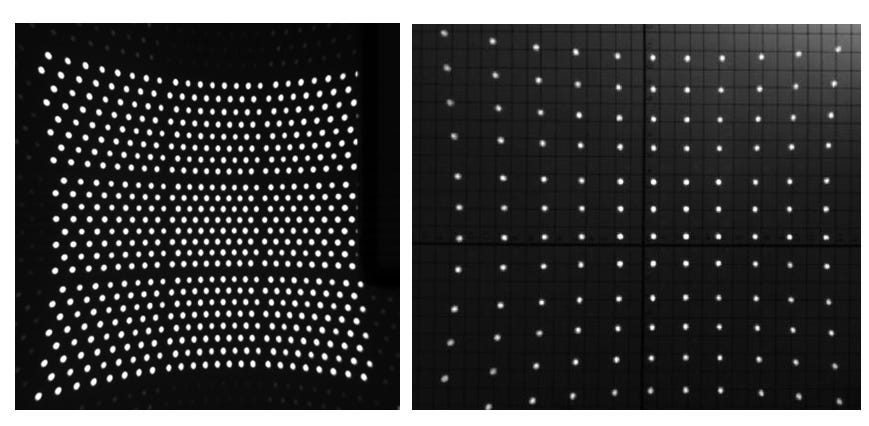

Figure 2. iPad DTOF transmission dot matrix

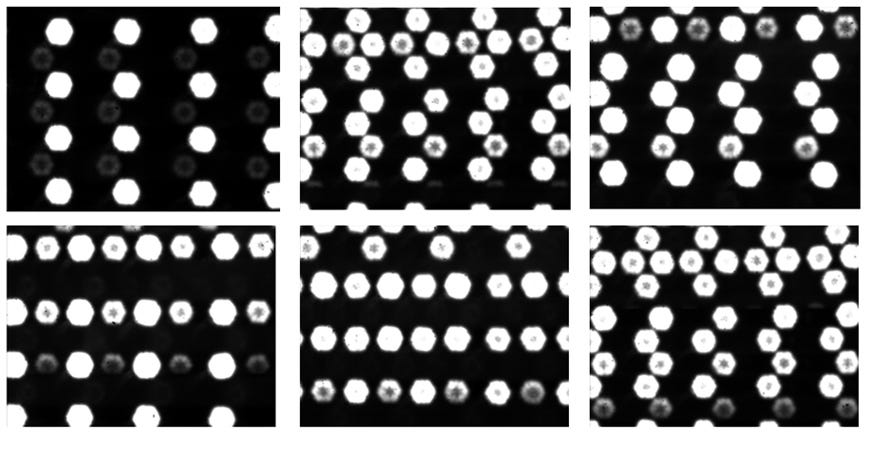

The DTOF of the iPad has two working modes: the normal working mode and the power-saving mode. In Figure 2 above, the left side shows the normal working mode with a total of 576 luminous points, while the right side shows the power-saving mode, in which only 144 points are working. These points are not all illuminated at the same time. When filmed with a high-speed camera, different luminous points emit light alternately, as shown in the figure below.

Figure 4. Instantaneous image of iPad DTOF launch dot matrix

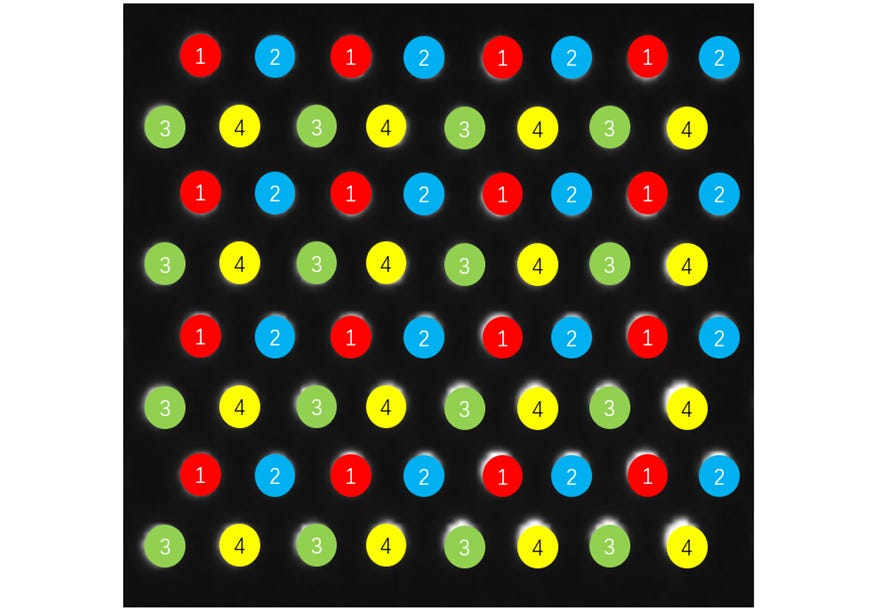

After testing, it is found that the luminous points are divided into four groups, which emit light in turns. Taking a unit as an example, the emission sequence is shown in the following figure:

Figure 5. iPad DTOF transmit dot matrix sequence diagram.

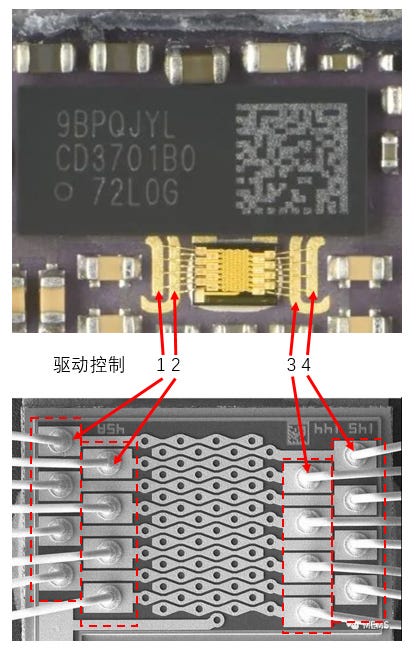

In the picture, we have marked different lighting sequences with different colors. We can see that in each row, the lighting sequence is 1–2–1–2 repeated or 3–4–3–4 repeated. This follows from the special VCSEL design. It is reported that this VCSEL is provided by Lumentum. The entire VCSEL adopts a common negative electrode design. The positive electrode is divided into four large regions, and the driving signal can address each of these four regions separately. The structure diagram of the laser part is shown in the figure below:
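The grouping can be modeled with a small sketch. The 16 rows of 4 emitters come from the VCSEL layout described in this article, and the 1–2–1–2 and 3–4–3–4 row patterns come from the observation above. How the two row patterns are distributed over the rows is not stated, so the strict alternation used here is an assumption, and the function name is hypothetical.

```python
from collections import Counter

ROWS = 16  # emitter rows on the VCSEL, per the article
COLS = 4   # emitters per row, per the article


def emitter_group(row: int, col: int) -> int:
    """Return the drive group (1-4) of an emitter.

    Assumption: even rows use the 1-2-1-2 pattern and odd rows use 3-4-3-4.
    The article only says each row follows one of the two patterns.
    """
    base_group = 1 if row % 2 == 0 else 3
    return base_group + (col % 2)


layout = [[emitter_group(r, c) for c in range(COLS)] for r in range(ROWS)]
group_sizes = Counter(group for row in layout for group in row)

if __name__ == "__main__":
    for row in layout[:4]:
        print(row)
    print(dict(group_sizes))
```

Under this assumption each of the four groups drives 16 emitters, a quarter of the array.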

Figure 6. iPad DTOF transmission dot matrix sequence diagram (image source: internet)

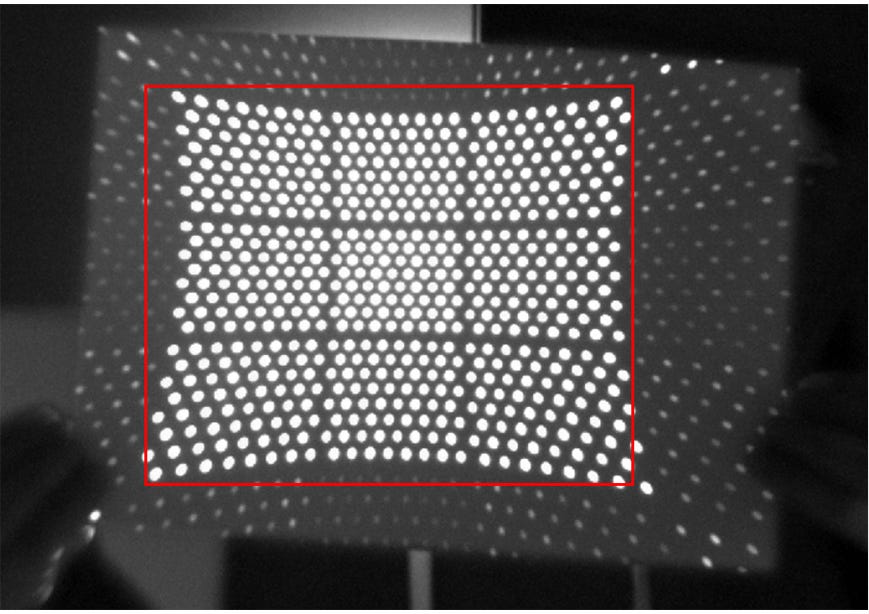

It can be seen from the figure that each row has 4 light spots and there are 16 rows, giving a total of 64 light spots divided into four groups. In reality, however, 576 spots appear. This is because a DOE element is used at the light-emitting end. It generates ±1-order diffraction in the vertical, horizontal, and diagonal directions, which expands each light-emitting point into nine (64 × 9 = 576). As the figure below shows, apart from the original 0-order and ±1-order spots in the red box, the rest of the light spots are the result of DOE element diffraction.
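The spot count can be reproduced by enumerating the diffraction orders. This sketch only counts spots; it does not model their angular positions, and the function name is made up for illustration.

```python
from itertools import product

EMITTERS_PER_ROW = 4
EMITTER_ROWS = 16
DIFFRACTION_ORDERS = (-1, 0, 1)  # -1, 0 and +1 order along each axis


def projected_spots(rows: int, cols: int, orders=DIFFRACTION_ORDERS):
    """List every projected spot as (emitter, (order_x, order_y))."""
    emitters = [(r, c) for r in range(rows) for c in range(cols)]
    # Each emitter is copied once per (order_x, order_y) pair: 3 x 3 = 9 copies.
    return [
        (emitter, order_pair)
        for emitter in emitters
        for order_pair in product(orders, repeat=2)
    ]


spots = projected_spots(EMITTER_ROWS, EMITTERS_PER_ROW)
zero_order_spots = [s for s in spots if s[1] == (0, 0)]

if __name__ == "__main__":
    print(len(zero_order_spots))  # undiffracted copies, one per emitter
    print(len(spots))             # all projected spots
```

The (0, 0) pair is the undiffracted copy of each emitter, and the other eight pairs are the horizontal, vertical, and diagonal copies produced by the DOE.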

Figure 7. The lattice diffraction pattern of the iPad DTOF emission.

6. Software (SW) and Intellectual Property (IP)

Apple’s sensor fusion software plays an important role in the overall 3D LiDAR depth sensing. Based on the test results, the system can still output depth when the LiDAR sensor is covered, but the accuracy of the point cloud is poor. Likewise, when the camera is covered, the LiDAR sensor can still output a depth map, but the point cloud density is poor. Fusing the camera, the LiDAR, and other sensors such as the accelerometer, gyroscope, and magnetometer provides an improved depth map. Apple’s LiDAR depth sensor has indeed achieved a best-in-class depth user experience.

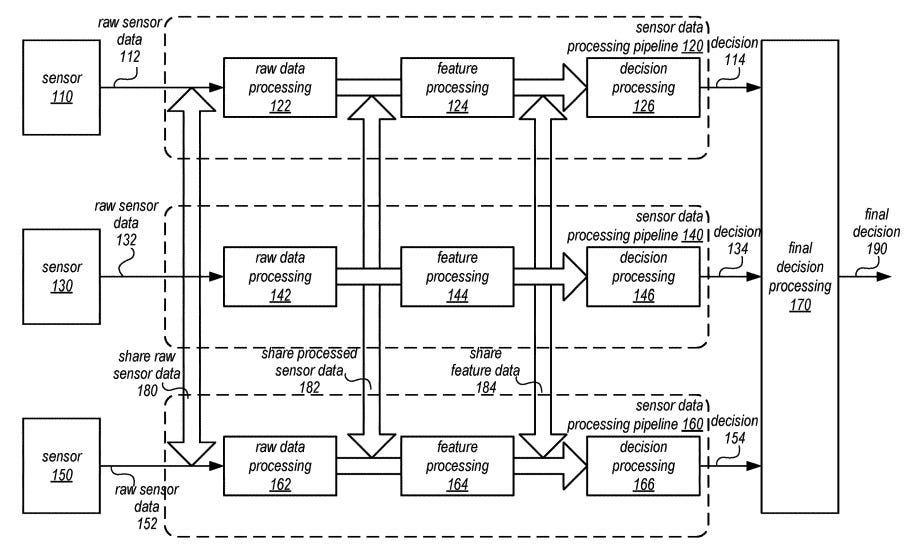

In a patent granted by the US Patent and Trademark Office titled “Shared sensor data across sensor processing pipelines,” Apple proposes that multiple processes could collaborate more closely on the data they collect. This patent can be applied to LiDAR and autonomous vehicles.

A typical sensor data processing pipeline would, in its simplest terms, involve sensors collecting data and providing it to a dedicated processing system, which then determines the situation and a relevant course of action and sends that to other systems. Generally, data from a sensor would be confined to just one pipeline, with no real influence from other systems.

In Apple’s proposal, each pipeline could make a perception decision based on the data it has collected, with multiple pipelines capable of reaching different decisions because they use different collections of sensors. While individual perceptions would be taken into account, Apple also proposes that a fused perception decision could be made based on a combination of the two determined states.

A logical block diagram illustrating shared sensor data across processing pipelines.

Furthermore, Apple also suggests the data could be more granular, with decisions made and fused at different stages of each pipeline. This data, which can be shared between pipelines and can influence other processes, can include raw sensor data, processed sensor data, and even data derived from sensor data.

By offering more data points, a control system has more information available when creating a course of action and may be able to make a more informed decision.

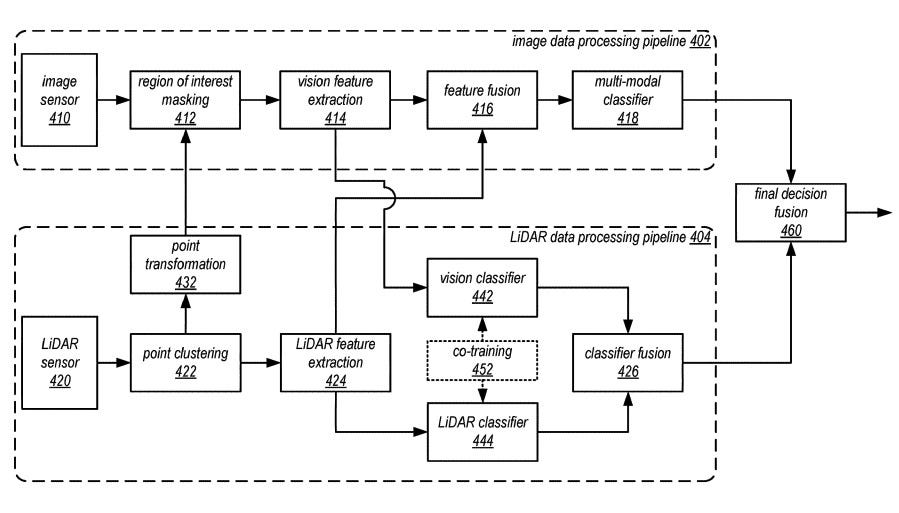

As part of the claims, Apple mentions how the pipelines could handle related but different types of data, such as a first pipeline for image sensor data while a second uses data from LiDAR.

Combining such data could allow for determinations that wouldn’t normally be possible with one set of data alone. For example, LiDAR data can determine distances and depths, but it cannot see color, which may be important when trying to recognize objects on the road and which it could acquire from an image sensor data processing pipeline.
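The idea of two pipelines contributing to one fused perception can be sketched as follows. This is a purely hypothetical illustration, not the patent's design or Apple's implementation: the class names, the nearest-return rule, and the dominant-channel color rule are all assumptions invented for this example.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class LidarPerception:
    distance_m: float  # what the LiDAR pipeline can determine


@dataclass
class ImagePerception:
    color: str  # what the image pipeline can determine


@dataclass
class FusedPerception:
    distance_m: float
    color: str


def lidar_pipeline(ranges_m: Sequence[float]) -> LidarPerception:
    """Hypothetical rule: report the nearest return as the object distance."""
    return LidarPerception(distance_m=min(ranges_m))


def image_pipeline(pixel_rgb: Tuple[int, int, int]) -> ImagePerception:
    """Hypothetical rule: name the strongest RGB channel as the object color."""
    names = ("red", "green", "blue")
    strongest = max(range(3), key=lambda i: pixel_rgb[i])
    return ImagePerception(color=names[strongest])


def fuse(lidar: LidarPerception, image: ImagePerception) -> FusedPerception:
    """Combine what each pipeline alone cannot provide."""
    return FusedPerception(distance_m=lidar.distance_m, color=image.color)


if __name__ == "__main__":
    fused = fuse(lidar_pipeline([12.5, 8.0, 30.0]), image_pipeline((200, 30, 20)))
    print(fused)
```

Neither pipeline alone yields both fields of the fused result, which is the point the example above makes about distance and color.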

Another logical block diagram, this time showing sharing between a LiDAR sensor pipeline and one for image processing.

Apple offers that the concept could be used with other types of sensor as well, including but not limited to infrared, radar, GPS, inertial, and angular rate sensors.

Credit:

- ABAX

- Yole

- ST

- AppleInsider